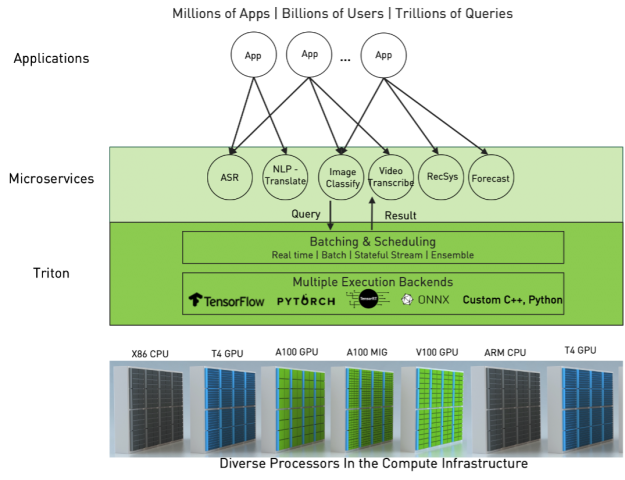

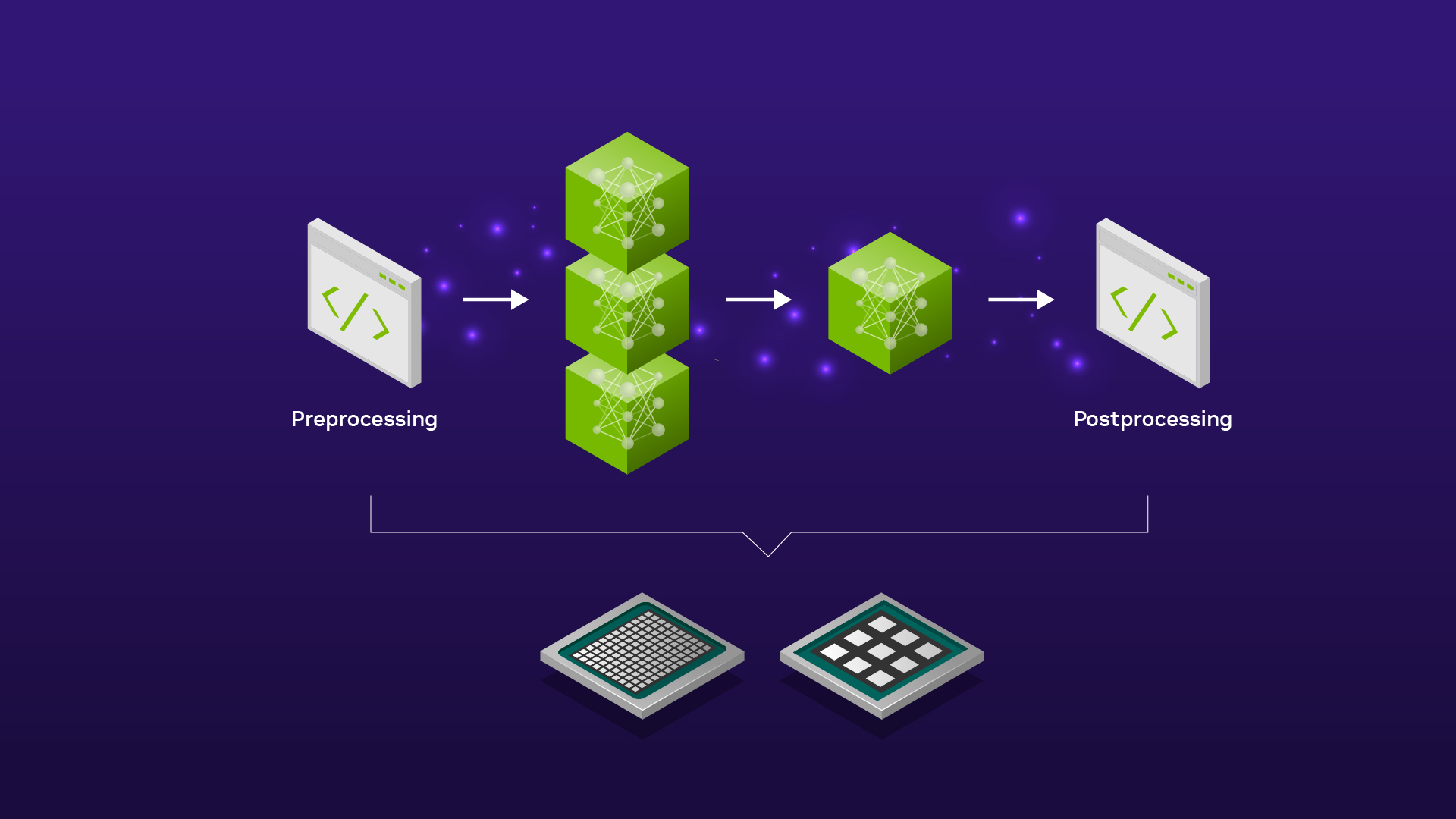

Serving ML Model Pipelines on NVIDIA Triton Inference Server with Ensemble Models | NVIDIA Technical Blog

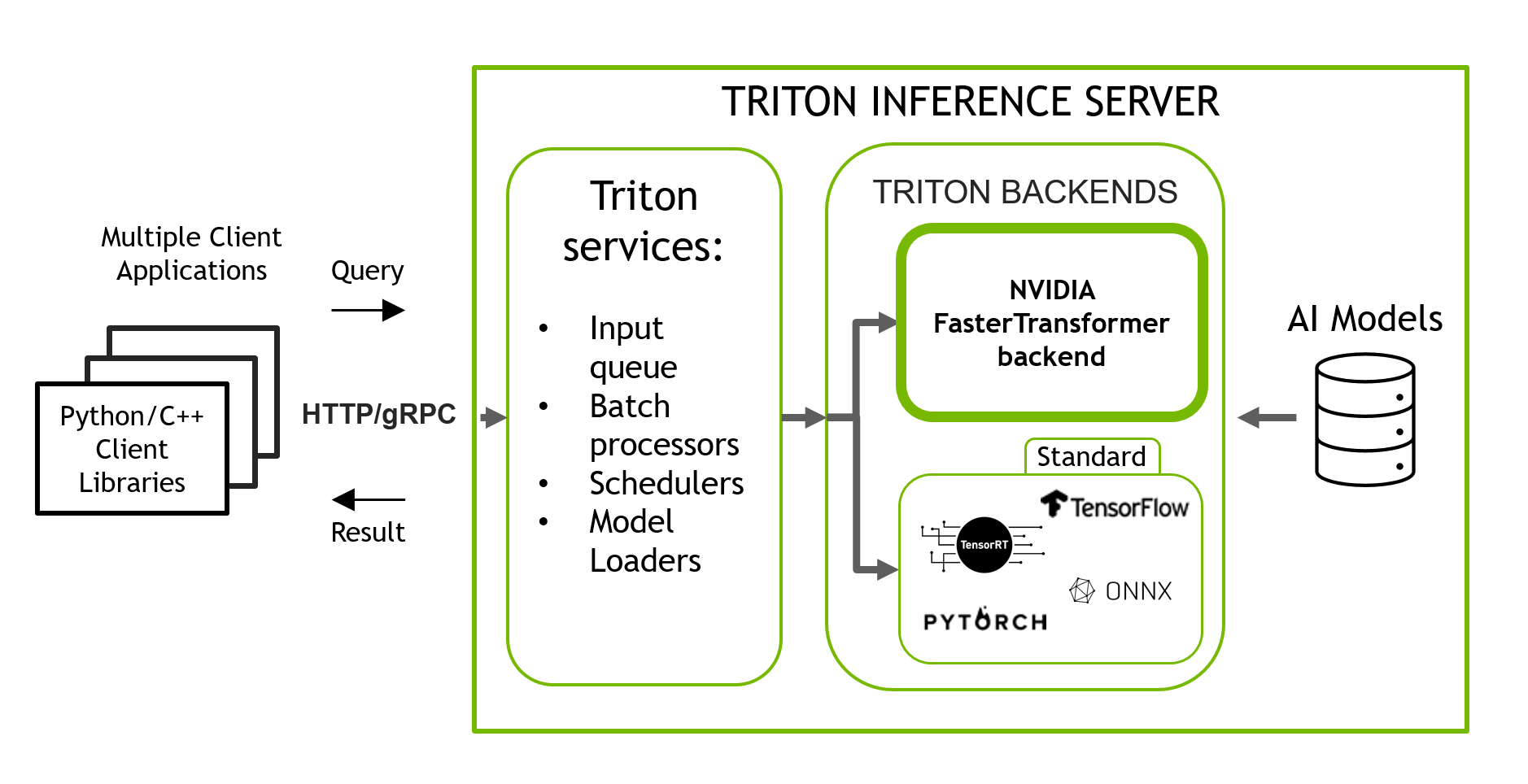

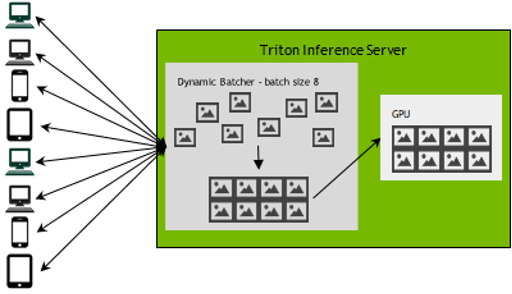

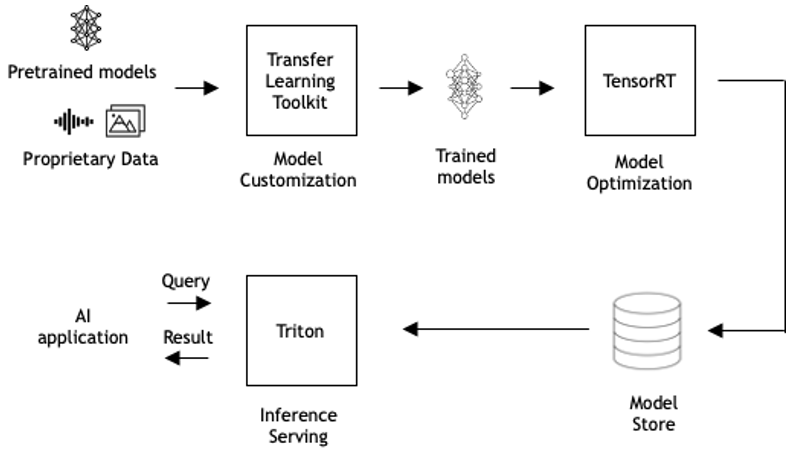

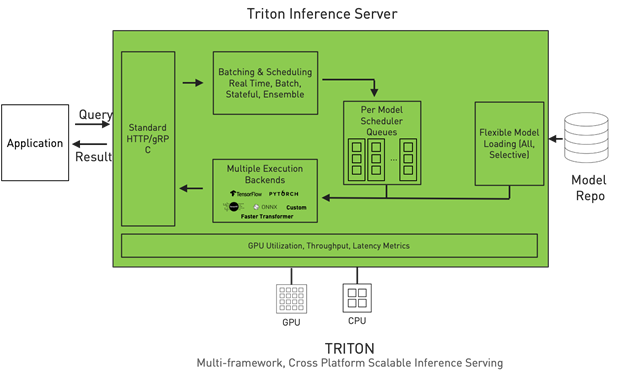

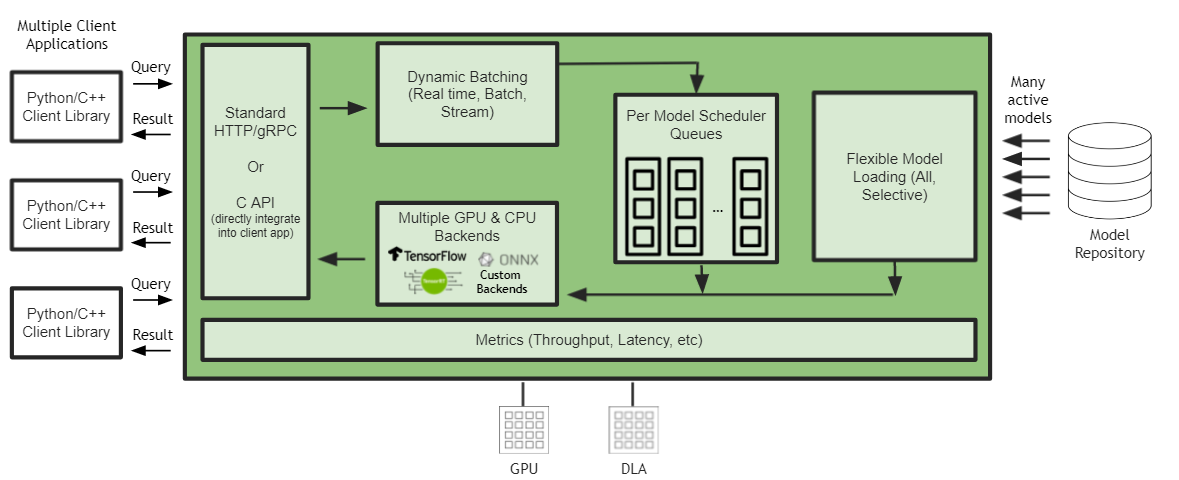

Serving and Managing ML models with Mlflow and Nvidia Triton Inference Server | by Ashwin Mudhol | Medium

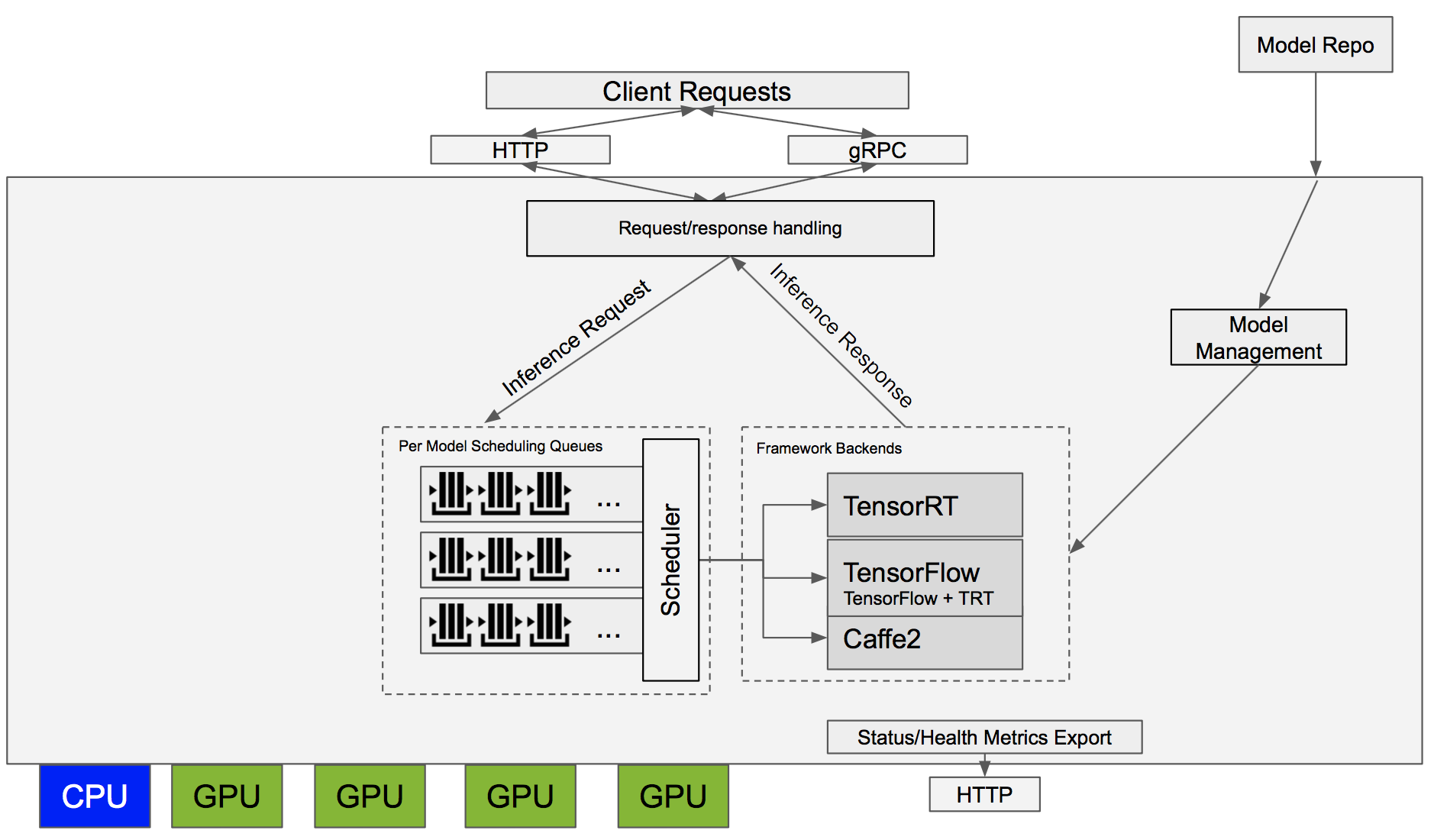

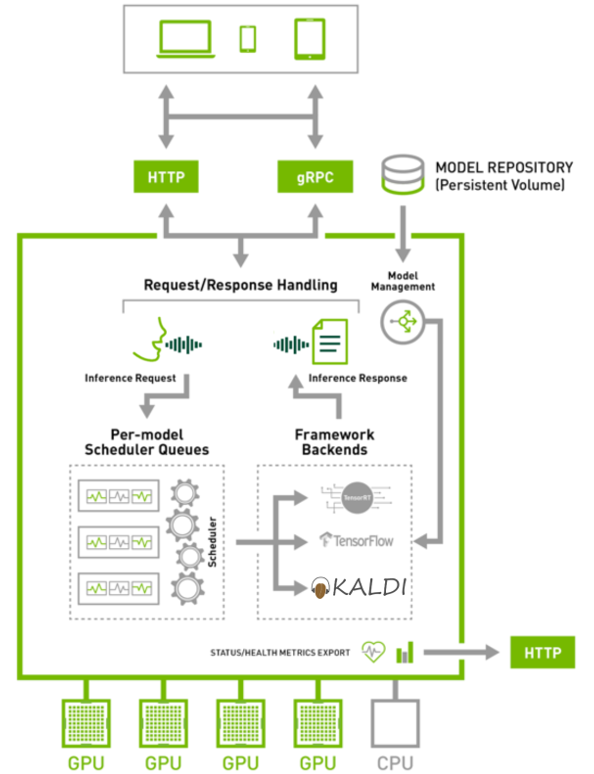

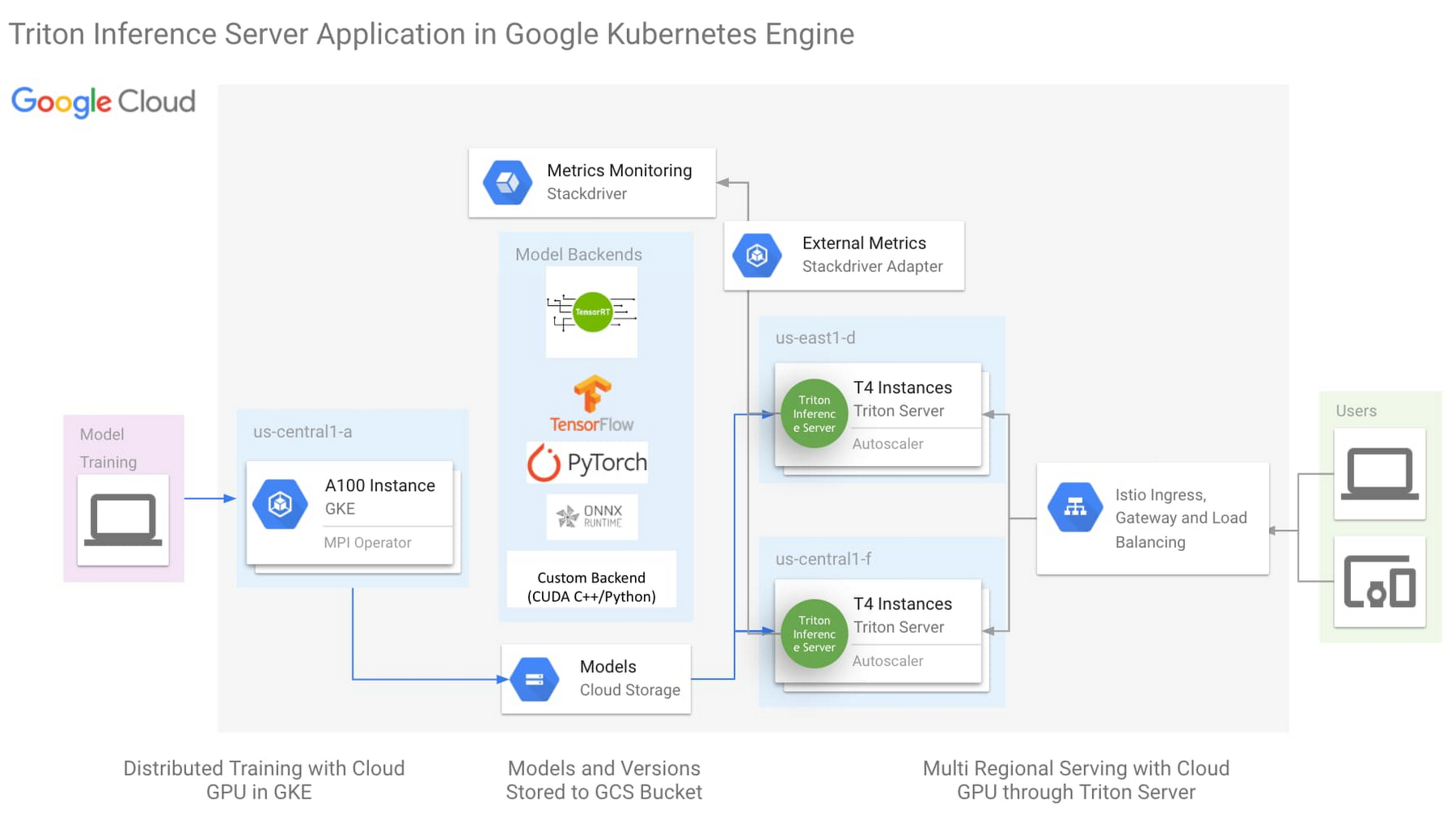

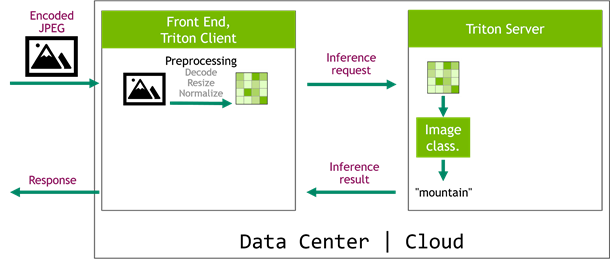

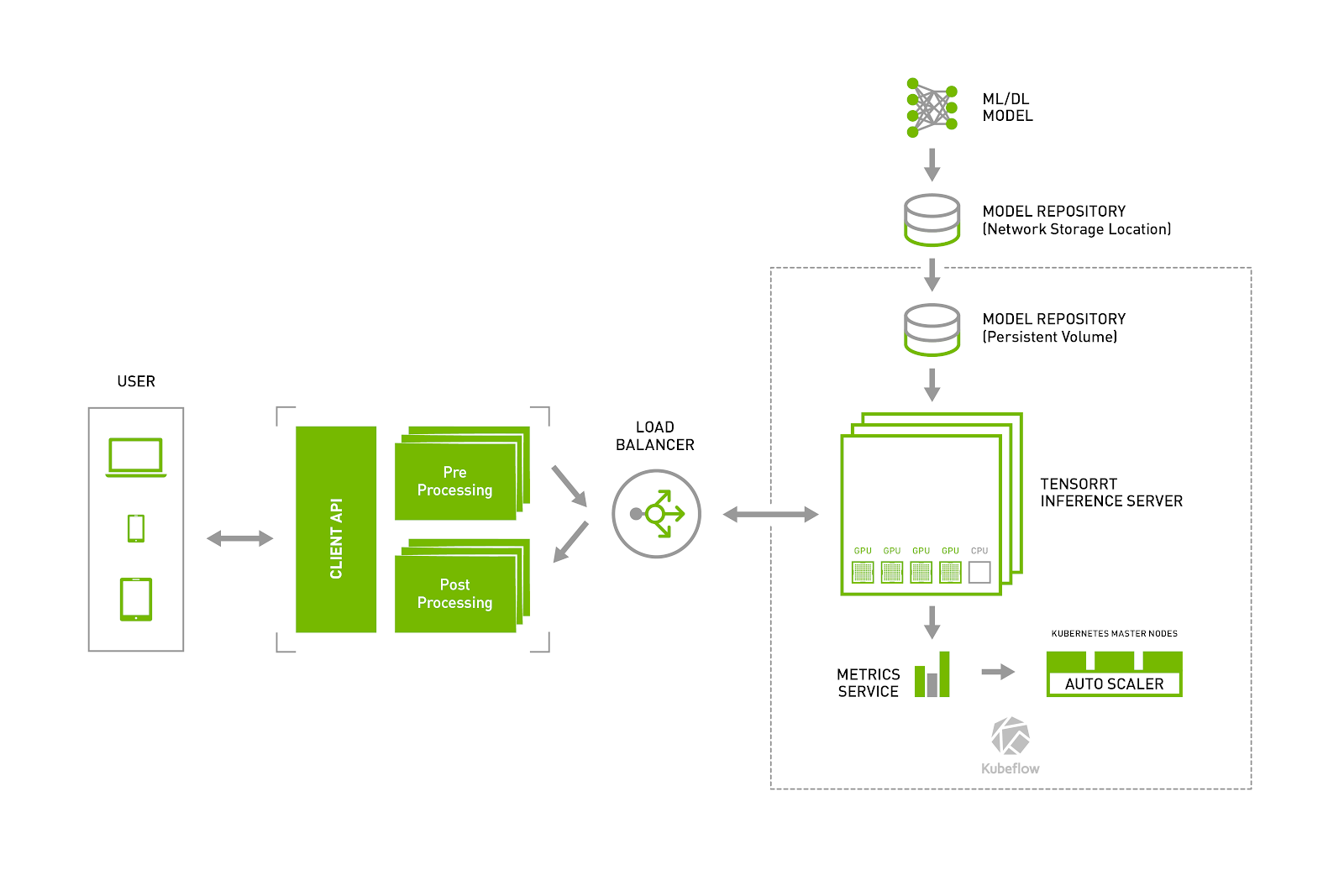

NVIDIA TensorRT Inference Server and Kubeflow Make Deploying Data Center Inference Simple | NVIDIA Technical Blog

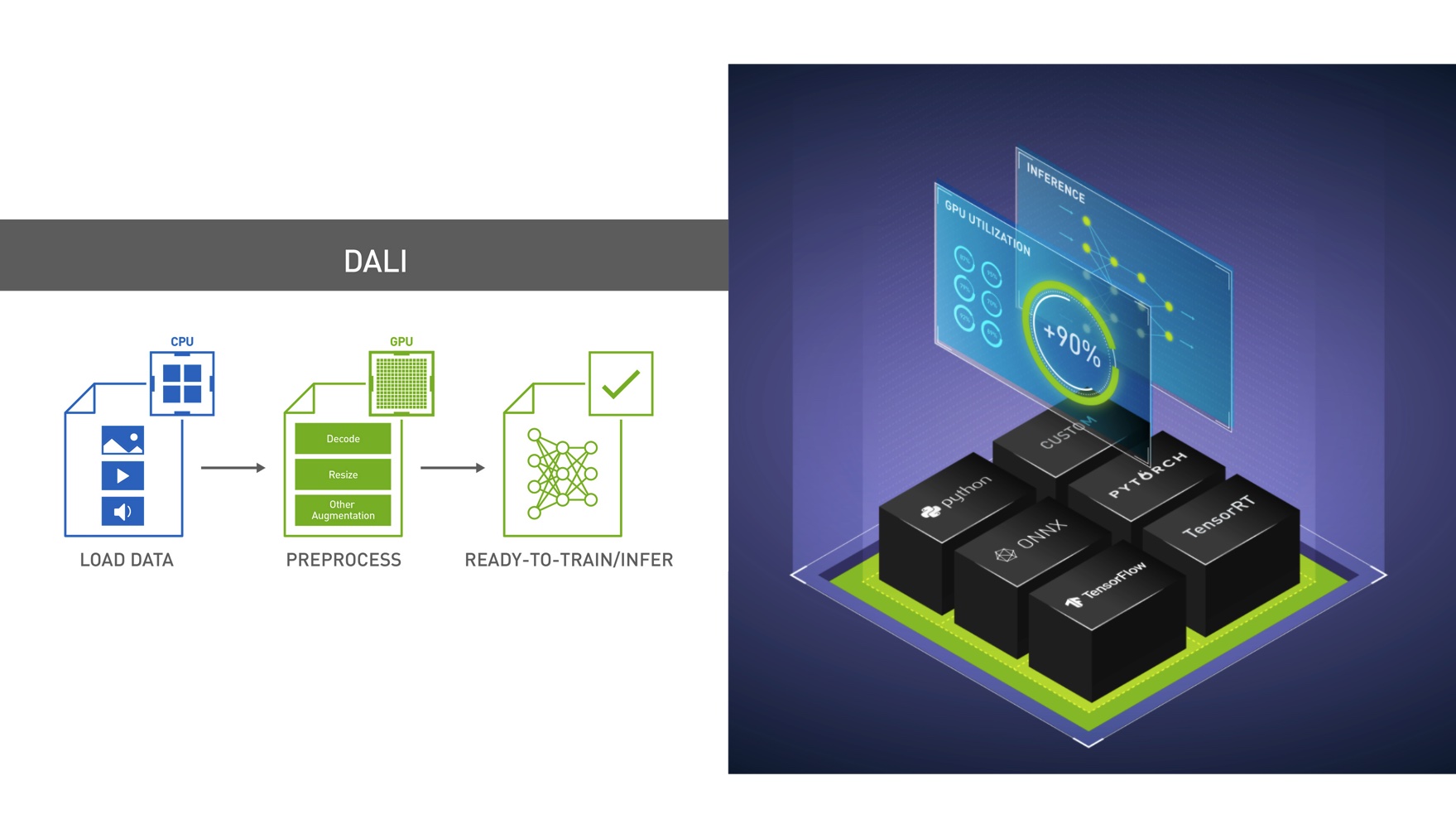

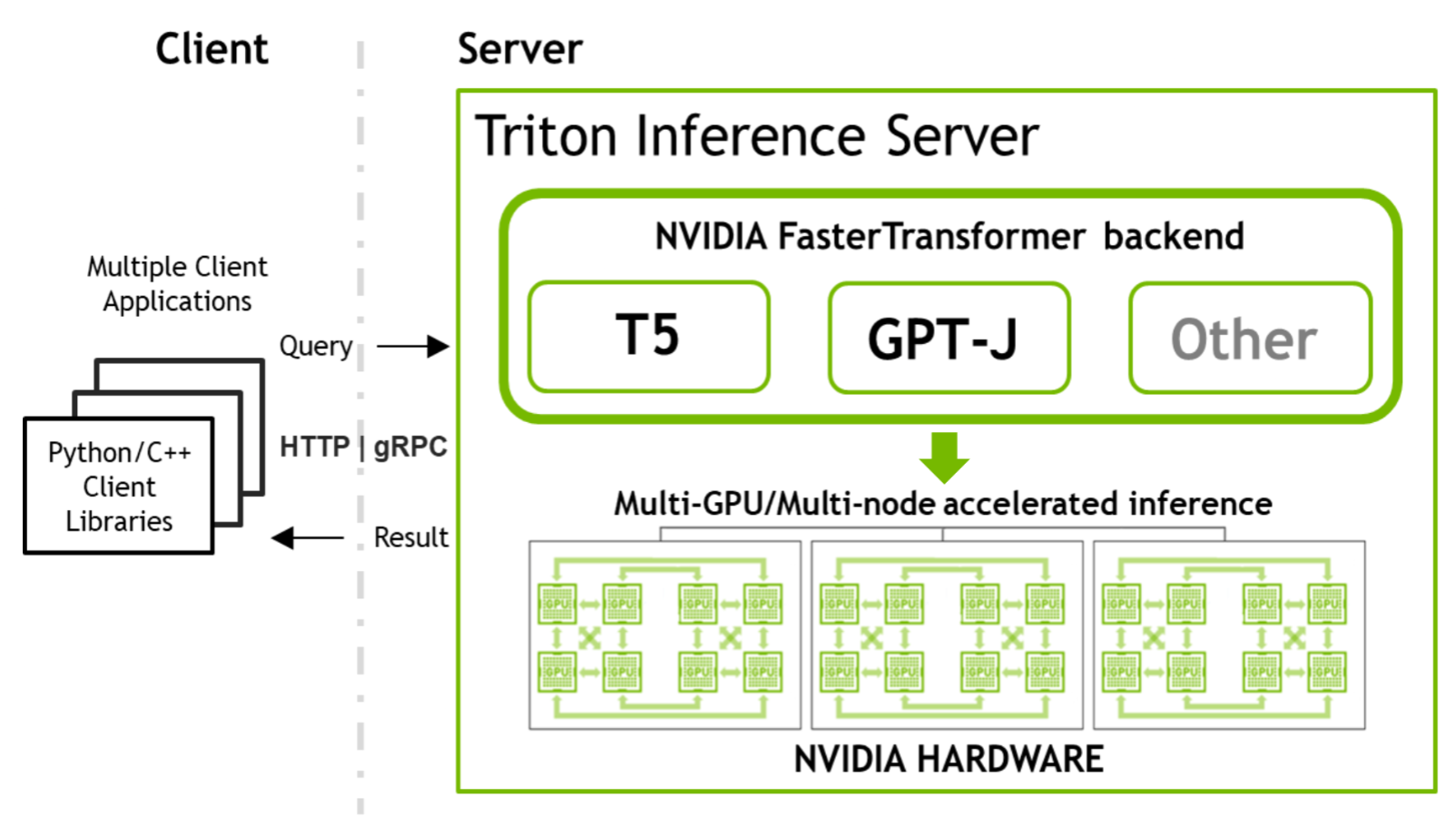

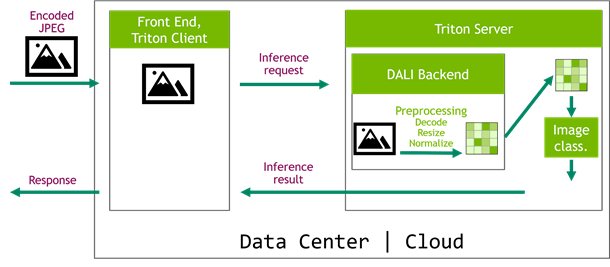

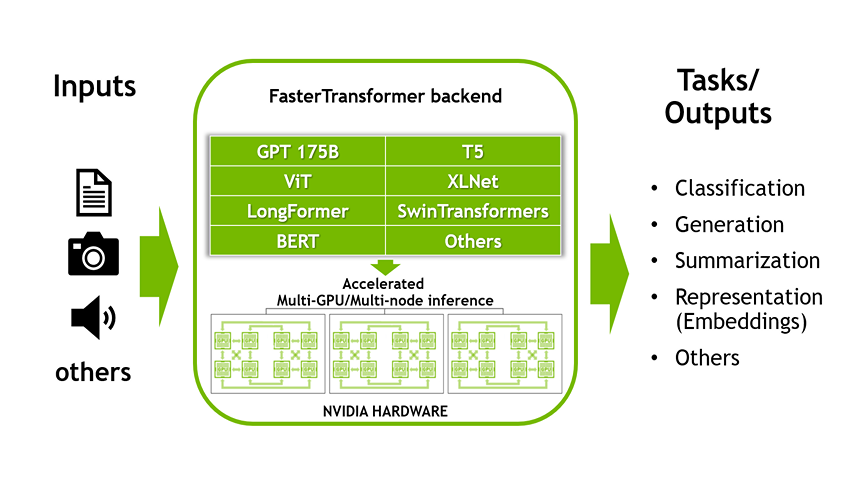

Accelerated Inference for Large Transformer Models Using NVIDIA Triton Inference Server | NVIDIA Technical Blog